Saturday, February 20th, 2010

The SPIE’s 7th Frits Zernike Award for Advances in Optical Microlithography goes to M. David Levenson, BetaSights Litho & DFM Editor, in recognition of one of the most important developments in lithography resolution enhancement of the last twenty years, the phase shifting mask (PSM). About 30 years ago at the IBM San Jose Lab, Levenson conceived the alternating PSM (alt-PSM).

UPDATE: In presentation #7641-27 of SPIE’s DFM session, Richard Schenker said Intel has now made over ONE BILLION chips using alt-PSM, starting at the 180nm node (HVM about ten years ago), for gate and for contact layers (the latter with sub-resolution assist features). –M.D.L., February 26th

Dr. Marc David Levenson graduated from MIT in 1967, received his PhD degree from Stanford University in 1972, and post-doc’d at Harvard. He later became an Associate Professor of Physics and Electrical Engineering at USC in Los Angeles before joining the IBM San Jose Research Laboratory in 1979, where he worked on laser applications in science and technology, including quantum optics. He is best known in science for his book “Introduction to Nonlinear Laser Spectroscopy,” and in technology for originating the PSM. During the retrenchment at IBM in 1993, he left to help form Focused Research (a division of New Focus, Inc.). Later, he held visiting positions at JILA (U. of Colorado at Boulder) and Rice U. in Houston, Texas. Until one year ago, he was Editor-in-Chief of Microlithography World Magazine, and he remains proprietor of M.D. Levenson Consulting as well as Litho and DFM Editor at BetaSights.net. He is a Fellow of IEEE, OSA, and APS, and a member of SPIE and the National Academy of Engineering.

BetaSights founder and editor Ed Korczynski posed the following questions about physics, lithography, and what it’s like to try to lead the world through both metaphorical and literal darkness.

When did you first decide to become a physicist, and was there a particular teacher/mentor who sparked your interest?

I was probably always interested in science, but began focusing on physics in junior high. Partly it was the influence of Scientific American, which my father subscribed to, and partly it was the availability of cool cheap stuff from Edmund Scientific. I remember Sputnik and hearing its beep on a short-wave radio…that changed things. There also was the fact that my chemistry set experiments tended to produce disastrous results!

I don’t recall any outstanding teachers in West Virginia in my early years. The junior high science teacher decided we needed to do better on the Achievement Test, so he gave us the answers. I realized that, if I was going to learn anything, I would have to teach myself.

The laser fascinated me from the beginning and I remember going to an IRE meeting in Pittsburgh with my father, where some lecturer spoke about the earliest discoveries. When I got to MIT, there was an open-cavity HeNe laser in the freshman lab (and a Spectra Physics black-box laser to line it up). I learned to make it work and fiddled with the modes and diffraction patterns.

Unfortunately, when I needed a job as an undergraduate, the laser group had nothing. So, I got a job at the Laboratory for Nuclear Science and built equipment for particle physics experiments at the Cambridge Electron Accelerator. The CEA had blown up just before my job was to start and we had to do a lot of rebuilding before we could run anything. Still, it was a wonderful period. I did my senior dissertation in particle physics and had access to more resources as an undergraduate at LNS in the mid-’60s than I ever had again!

Had you been working on phase-shift phenomena in general while you were a tenured professor at USC, and was that why IBM Almaden Research recruited you?

I was working there on multiphoton laser spectroscopy and four-wave mixing, not phase shift. I lost a series of academic cat fights and began looking around when I realized that lots of people had lifetime tenure at institutions like San Quentin, but would give it up for a decent opportunity.

There were rumors of two good opportunities for a laser spectroscopist, which turned into offers: one at NIST in Gaithersburg and one at IBM in San Jose. I had been a summer student at IBM and my wife had connections at SRI and we both loved the weather here, so I chose IBM. In retrospect, it was a mistake. The project was in data storage, the photochemical hole burning memory. I thought I knew something useful about that technology, but it turned out that what I needed to know was to keep my mouth shut!

When you started the R&D, did you know of anyone else working on phase-shift approaches to microlithography?

I didn’t know of anyone working on phase-shift at the time. However, it has turned out that Masato Shibuya at Nikon was thinking about the same things at the same time. He was in pure stealth-mode, though! I later found out that he had visited Fairchild just down the road from IBM after I had published the first paper, and was asked about the idea, but said nothing!

Prof. Hank Smith and his students at MIT had also thought about phase shift in the context of X-ray proximity lithography, but their idea was different from mine and more closely related to the attenuated-PSM, which was ultimately proposed by Burn Lin.

There had been different applications of phase-shifting plates in holographic data storage that were turned up by the patent attorneys later. I didn’t know about any of them. Today, they use gigantic plates like that to homogenize the laser beams at the National Ignition Facility in Livermore!

What has surprised you in the timing or manner of PSM use in industry since the invention?

I am amazed that it took over 25 years for the alt-PSM to be adopted here. There just was so much resistance, and not all of it from IBM management! Hitachi put an Alternating PSM on the cover of their annual report in 1989 and no one in the U.S. did anything. Even now, there are very few alt-PSMs being built in the world. My former company, IBM, never did succeed in using it to produce high-yielding chips, although they began making thin film heads with alt-PSMs soon after I left. There never was adequate R&D support for alt-PSMs and now it is too late.

On the other hand, the attenuating-PSM was adopted rather quickly, even before the materials problems were really addressed seriously. I had been told by the “experts” at IBM that depositing and etching uniform films with specified attenuation and phase-shift was essentially impossible. That may actually have been true, but the technology worked anyway! Now, of course, the neglect is showing itself as polarization issues come to the fore.
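
For readers who do not engineer with photons, the two film specifications mentioned here can be sketched with textbook thin-film relations: a layer of refractive index n and thickness d delays the wave by φ = 2π(n - 1)d/λ relative to air, and an extinction coefficient k attenuates the intensity by roughly exp(-4πkd/λ). The minimal Python sketch below solves for the 180° thickness and the resulting transmission. The optical constants are hypothetical placeholders, not data for any real mask material, interface reflections are ignored, and the function names are illustrative.

```python
import math


def phase_shift_thickness(wavelength_nm: float, n: float) -> float:
    """Film thickness (nm) that delays the wave by pi (180 degrees) relative to air.

    Solves phi = 2*pi*(n - 1)*d / wavelength = pi for d.
    """
    return wavelength_nm / (2.0 * (n - 1.0))


def intensity_transmission(wavelength_nm: float, k: float, thickness_nm: float) -> float:
    """Bulk intensity transmission exp(-4*pi*k*d / wavelength); interface reflections ignored."""
    return math.exp(-4.0 * math.pi * k * thickness_nm / wavelength_nm)


# Hypothetical optical constants at the ArF wavelength; NOT data for any real mask film.
WAVELENGTH_NM = 193.0
N_FILM = 2.4
K_FILM = 0.6

thickness = phase_shift_thickness(WAVELENGTH_NM, N_FILM)
transmission = intensity_transmission(WAVELENGTH_NM, K_FILM, thickness)
print(f"180-degree thickness: {thickness:.1f} nm, transmission: {100 * transmission:.1f}%")
```

Because the thickness is pinned by the phase target, the transmission then follows from k alone, so the phase and attenuation specifications are coupled through the film's n and k.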

You continue to consult on optical microlithography technology development; can you tell us (in general) about some areas of interesting work you’ve done in the last 10 years?

The 21st century hasn’t been so great so far! Lately, I have actually been trying to go back to laser spectroscopy and quantum optics. However, I did get to collaborate with some wonderful people at DNP, Canon, Applied Materials and KLA-Tencor on phase-shift related ideas, most of which were not applied.

There was the Vortex mask, which would have printed the finest possible pattern of vias if a suitable negative resist were available. Such resists existed at the KrF wavelength, but not really at ArF, even now. If you print those tiny dark spots in positive ArF resists, you get little posts, which all fall down! I think IMEC is still working on that using our mask….

In 2003, at the Maydan Technology Center, we also did double patterning with alt-PSMs using the LELE and LPLE schemes. We broke the k1=0.25 barrier using available materials, but at KrF wavelength, which meant the line/space half pitch was 70nm. Of course, everyone was on to ArF by then and the double patterning technology wasn’t yet ready at 193nm. It still isn’t!
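
The k1 factor is conventionally defined as k1 = (half pitch) × NA / λ. The sketch below applies it to the 70nm half pitch at 248nm. The interview does not state the numerical aperture of the exposure tool, so NA = 0.80 is an assumed, illustrative value. The sketch also shows why double patterning helps: in LELE each exposure prints only every other line, so each exposure works at twice the final pitch.

```python
def k1_factor(half_pitch_nm: float, wavelength_nm: float, numerical_aperture: float) -> float:
    """Process factor k1 = half_pitch * NA / wavelength."""
    return half_pitch_nm * numerical_aperture / wavelength_nm


WAVELENGTH_KRF_NM = 248.0
FINAL_HALF_PITCH_NM = 70.0
NA = 0.80  # assumed for illustration; the interview does not give the tool's NA

# Combined (double-patterned) grating
k1_combined = k1_factor(FINAL_HALF_PITCH_NM, WAVELENGTH_KRF_NM, NA)
# In LELE each exposure prints every other line, so it sees twice the final pitch.
k1_per_exposure = k1_factor(2 * FINAL_HALF_PITCH_NM, WAVELENGTH_KRF_NM, NA)
# Largest NA for which a 70 nm half pitch at 248 nm still lies below k1 = 0.25
na_break_even = 0.25 * WAVELENGTH_KRF_NM / FINAL_HALF_PITCH_NM

print(f"combined k1 = {k1_combined:.3f}")
print(f"per-exposure k1 = {k1_per_exposure:.3f}")
print(f"k1 < 0.25 requires NA < {na_break_even:.3f}")
```

With the assumed NA of 0.80, the combined grating sits near k1 ≈ 0.23 while each individual exposure works near k1 ≈ 0.45, comfortably above the single-exposure limit.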

Do you have any official opinions about the likely usefulness of EUV lithography for commercial IC fabs?

We really have to hope that EUVL will be successful, but I personally have grave doubts. To me it looks like a re-run of the 1X synchrotron X-ray debacle, where the R&D managed to stay just behind the leading edge of established technologies for several Moore’s Law generations. That enabled nice long R&D careers for many X-ray promoters, but harmed the industry as a whole.

EUVL is very, very hard technically and the business case has never been worked out for the whole food chain. Key bits of the infrastructure lag badly due to underinvestment and other parts are prohibitively expensive. My guess is that EUVL will be used for some classes of chips, but not widely and not profitably. The roll-out will be slow and the throughput per dollar will be low. Innovators will be able to side-step the limitations of other technologies before EUVL gets its act together. For example, Intel and Micron are making 25nm half-pitch NAND flash memories today. I think it is being done with sidewall double patterning, which is way cheaper than EUVL. If they can use that technology to make logic or DRAM, it is game over!
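
Sidewall (spacer) double patterning halves the pitch of a printed mandrel pattern by keeping only the spacers deposited on the mandrel sidewalls. The following is an idealized one-dimensional sketch, assuming perfectly conformal spacers on vertical sidewalls, mandrel width equal to spacer width, and hypothetical dimensions chosen to give 25nm lines at 50nm pitch. It is not a description of the actual Intel or Micron process, and the function name is illustrative.

```python
from typing import List, Tuple

Line = Tuple[float, float]  # (left edge, right edge) in nm


def spacer_lines(mandrel_pitch: float, mandrel_width: float,
                 spacer_width: float, n_mandrels: int) -> List[Line]:
    """Idealized 1-D sidewall (spacer) double patterning.

    Assumes a perfectly conformal spacer of thickness spacer_width on both vertical
    sidewalls of every mandrel; after etch-back and mandrel removal, only the
    spacers remain as lines.
    """
    lines: List[Line] = []
    for i in range(n_mandrels):
        left = i * mandrel_pitch          # left sidewall of mandrel i
        right = left + mandrel_width      # right sidewall of mandrel i
        lines.append((left - spacer_width, left))    # spacer on the left sidewall
        lines.append((right, right + spacer_width))  # spacer on the right sidewall
    return lines


# Hypothetical dimensions: equal mandrel and spacer widths, mandrel pitch = 4 x spacer width.
SPACER = 25.0
lines = spacer_lines(mandrel_pitch=4 * SPACER, mandrel_width=SPACER,
                     spacer_width=SPACER, n_mandrels=4)
pitches = [b[0] - a[0] for a, b in zip(lines, lines[1:])]
widths = [r - l for l, r in lines]
print("line pitches (nm):", pitches)
print("line widths (nm):", widths)
```

In this idealization the lithography step only has to resolve the 100nm mandrel pitch; the deposition thickness, not the optics, sets the final 25nm line width.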

You’ve famously summarized one of the fundamental principles of PSM as “there is no wavelength of darkness”; could you elaborate on this principle for those of us who do not engineer with photons?

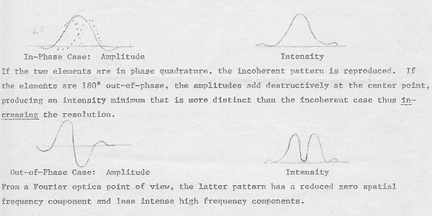

Everyone has heard of the “wavelength of light,” and most engineers know that it sets a fundamental limit for the resolution of optical instruments, but the usual Rayleigh Criterion only applies to bright features. Criteria based on Fourier optics apply to gratings of bright and dark, so you hear about “pitch” and “half-pitch.” However, the size of the individual dark features between the bright ones is not set by the wavelength. Rather, it depends on the criterion you set for the transition between dark and light (see the figure from the original 1980 patent application). Destructive interference in an image can make a line or spot of zero light intensity, but it has zero width. If you set the criterion for the edge of the dark line at 10% or 20% or 50% of the maximum intensity, you get different widths. By the way, that was known to Lord Rayleigh. In practice, that means that overexposing photoresist can make very narrow lines.
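
The threshold argument can be made concrete with an idealized two-beam interference image, I(x) = I_max sin²(πx/P), of the kind an alternating-phase grating produces. This is a simplifying assumption (no flare, no resist blur), and the function name is illustrative. In the Python sketch below, the intensity null at x = 0 has zero width, and the dark-line width comes entirely from the threshold chosen, as in the 10%, 20%, 50% example in the text.

```python
import math


def dark_line_width(period: float, threshold: float) -> float:
    """Width of the region where I(x)/I_max < threshold, for I(x) = I_max * sin^2(pi * x / period).

    The null at x = 0 itself has zero width; the printed width is set only by the threshold.
    """
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be a fraction of the peak intensity, in [0, 1]")
    return (2.0 * period / math.pi) * math.asin(math.sqrt(threshold))


PERIOD = 100.0  # arbitrary length units; one dark and one bright line per period
for t in (0.5, 0.2, 0.1, 0.01):
    dark = dark_line_width(PERIOD, t)
    print(f"threshold {t:.0%}: dark line {dark:5.1f}, bright line {PERIOD - dark:5.1f}")
```

At a 50% threshold the dark and bright lines are equal (P/2 each); lowering the threshold, which corresponds to overexposing, shrinks the dark line toward zero while the bright line widens.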

When you first conceived that “there is no wavelength of darkness,” did it come as a pure conceptual abstraction or did you somehow visualize spatial/temporal phenomena?

Actually it was just a rhetorical flourish, based on the need to get people thinking differently. By then we had done some dose-focus experiments and saw really extraordinarily narrow resist features, which unfortunately had all fallen over! I had calculated the minimum half pitch possible, which corresponds to a k1 factor of 0.25 – less than half the Rayleigh resolution. However, the dark lines were much narrower than that “half pitch!” The bright lines were wider.

Now, in practice, the width of the dark line is set by the stray light in the image, the so-called “flare,” which degrades CD control. Good lenses have low flare, and a dark-field alt-PSM layout (as used by Numerical Technologies, since bought by Synopsys) minimizes flare. So the way to reduce the dark CD is not to go to a shorter wavelength but to improve lens quality and design. Interestingly, microscopists have developed a means of combating the effect of flare using two wavelengths of light, one that excites molecules and one that de-excites them. They get 28nm images with visible light! Can we do that?
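
Extending the same idealized two-beam model, a uniform stray-light background f (a crude stand-in for flare, assumed here rather than taken from the interview) lifts the interference null, so that I(x)/I_max = f + (1 - f) sin²(πx/P). The sketch below, with an illustrative function name, shows that once the threshold drops to the flare level nothing stays dark, and that narrow dark lines are far more sensitive to small flare changes than wide ones.

```python
import math


def dark_line_width_with_flare(period: float, threshold: float, flare: float) -> float:
    """Dark-line width for I(x)/I_max = flare + (1 - flare) * sin^2(pi * x / period).

    `flare` is a uniform stray-light background (fraction of peak intensity) that fills
    in the interference null. If the threshold is at or below the flare level, no part
    of the image stays dark and the line does not print.
    """
    if not (0.0 <= flare < 1.0 and 0.0 <= threshold <= 1.0):
        raise ValueError("need 0 <= flare < 1 and 0 <= threshold <= 1")
    if threshold <= flare:
        return 0.0
    s = (threshold - flare) / (1.0 - flare)  # threshold rescaled to the sin^2 term
    return (2.0 * period / math.pi) * math.asin(math.sqrt(s))


PERIOD = 100.0
for threshold in (0.5, 0.05):  # nominal exposure vs. heavy overexposure
    for flare in (0.0, 0.01, 0.02, 0.05):
        w = dark_line_width_with_flare(PERIOD, threshold, flare)
        print(f"threshold {threshold:.0%}, flare {flare:.0%}: dark line {w:5.1f}")
```

In this toy model, going from 1% to 2% flare changes the 50%-threshold line by well under 1% of its width but changes the 5%-threshold line by more than 10%, one way to picture how flare, rather than wavelength, limits CD control of very narrow dark lines.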

What is your favorite sub-atomic “thingie” (i.e., wave/particle/phenomenon), and what’s it feel like to explore the extreme limits of empiric knowledge?

My favorite thingie right now is back-action-evading measurement, or quantum nondemolition (QND) measurement. Those methods allow you to measure the very vacuum fluctuations and predict quantum results! It may not be as cool as teleportation or Bose-Einstein condensates, but it is a bit easier!

Scientific research is really hard at the frontier. You are at the limits of your own knowledge, everyone else’s knowledge (whether they admit it or not) and available technology. The theory and equipment generally don’t work and have to be fixed. When you solve one problem, you generally uncover two more, and that goes on and on. To layer politics and funding concerns on top of that, as well as the need to keep predatory bosses at bay, well, that just makes it too hard to endure forever.

But eventually if you are persistent and lucky, the last problem gets solved and it all works. That feels gratifying personally, but then you have to tell someone. If what you have done illustrates an important issue or seems useful in technology, it is very disappointing to see your result relegated to obscurity. It is even worse to be denied the resources to do anything else because you have already had a “success.” I am glad that I did manage to publish most of the work, but generally someone else published the same general thing at about the same time! A lot of it has now been independently re-discovered 20 and 30 years later.

So, recognition like this Frits Zernike award feels very gratifying!

Do you find Walborn’s 2002 “quantum erasure” experiment to provide conclusive evidence in support of Bell’s Theorem, why, and what are the ramifications for what we (and Einstein) like to call “reality”?

Alain Aspect authoritatively disproved local objective realism 20+ years ago. I have some quibbles with the quantum erasure and welcher-Weg (“which-way”) experiments, but they illustrate a valid point: quantum mechanics is about information that you have (or should have) about a system and nothing more – certainly not “reality.” Our universe has in it nonmaterial correlations that can appear to violate all reason. Sorry, but that is the way it is!

The nonlocal correlations seen in the Bell’s inequality demonstrations emerge only from non-local experiments. If all you have is the local information from a photon detector at your location, you get a meaningless random stream of polarization data. So does your collaborator at the other side of the universe. However, if you get together later and compare notes, you find the photon polarizations at the two detectors are more closely correlated with one another than they can be to any common source. Those are the EPR-Bell correlations. However, that is a non-local experiment and it should not be surprising that a non-local property emerges. Entanglement gets stranger with 3 particles. Check out the Greenberger-Horne-Zeilinger (GHZ) experiments!

Personally, I regard it as a bug in the operating system of the universe!
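
The point that each detector alone sees only noise, while the correlation appears only when the records are compared, can be illustrated numerically. The sketch below assumes the textbook polarization-entangled state (|HH⟩ + |VV⟩)/√2, for which quantum mechanics predicts a correlation E(a, b) = cos 2(a - b) between polarizer settings a and b, and evaluates the CHSH form of Bell’s inequality at the standard angles 0°, 45°, 22.5° and 67.5° (an assumed choice). Local realistic models must satisfy |S| ≤ 2; the quantum value is 2√2. The sampler reproduces the predicted statistics but is deliberately non-local (it uses both settings at once), so it is an illustration, not a model of Aspect’s or Walborn’s apparatus; all names are illustrative.

```python
import math
import random
from typing import Callable, List, Tuple


def correlation(a_deg: float, b_deg: float) -> float:
    """Quantum prediction E(a, b) = cos(2(a - b)) for the state (|HH> + |VV>)/sqrt(2).

    Outcomes are +1 (photon transmitted by the polarizer) or -1 (blocked).
    """
    return math.cos(2.0 * math.radians(a_deg - b_deg))


def sample_pairs(a_deg: float, b_deg: float, n: int,
                 rng: random.Random) -> Tuple[List[int], List[int]]:
    """Draw n joint outcomes with the quantum-predicted statistics.

    Each side alone is a fair coin; the two agree with probability cos^2(a - b).
    This sampler uses both settings at once, so it is deliberately NOT a local model.
    """
    p_same = math.cos(math.radians(a_deg - b_deg)) ** 2
    alice: List[int] = []
    bob: List[int] = []
    for _ in range(n):
        x = 1 if rng.random() < 0.5 else -1
        y = x if rng.random() < p_same else -x
        alice.append(x)
        bob.append(y)
    return alice, bob


def chsh(e: Callable[[float, float], float]) -> float:
    """CHSH combination S at the standard angles (degrees); local realism requires |S| <= 2."""
    a, a2, b, b2 = 0.0, 45.0, 22.5, 67.5
    return e(a, b) - e(a, b2) + e(a2, b) + e(a2, b2)


N = 50_000
rng = random.Random(2010)


def estimated_correlation(a_deg: float, b_deg: float) -> float:
    """Estimate E(a, b) by comparing the two detectors' records after the fact."""
    alice, bob = sample_pairs(a_deg, b_deg, N, rng)
    return sum(x * y for x, y in zip(alice, bob)) / N


alice_only, _ = sample_pairs(0.0, 22.5, N, rng)
local_mean = sum(alice_only) / N
s_exact = chsh(correlation)
s_sampled = chsh(estimated_correlation)
print(f"Alice's local mean: {local_mean:+.3f}  (a random stream)")
print(f"CHSH S, quantum prediction: {s_exact:.3f}")  # 2*sqrt(2), about 2.83
print(f"CHSH S, sampled: {s_sampled:.3f}  (local bound: 2)")
```

Alice’s mean stays near zero, the “meaningless random stream” of the text, while the sampled S lands near 2.83, beyond the local bound of 2.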

-E.K. and M.D.L.